News

- 04/2026: Visited UC San Diego, UC Berkeley, and Stanford for invited talks

- 01/2026: A paper on outdoor semantic navigation, RAVEN, was accepted to ICRA 2026. See you in Vienna!

- 09/2025: I started a research internship at Field AI.

- 06/2025: Two papers, RayFronts and PIPE Planner, were accepted to IROS 2025.

- 05/2025: Attended ICRA 2025 to present MapEx. Also participated in the ICRA 2025 Doctoral Consortium.

- 12/2024: I passed my PhD Thesis Proposal! You can watch the recording of my presentation here.

- 05/2024: Attended ICRA 2024 and presented my work on multi-robot exploration.

- 12/2023: A survey on foundation models and robotics was released. Check it out here!

- 11/2023: Attended CoRL 2023. Here are the notes on the sessions that I joined.

- 07/2022: Two papers on few-shot object detection were accepted at ECCV 2022 and IROS 2022.

- 09/2020: Started my PhD in Robotics at Carnegie Mellon University!


Invited Talks

- 3D Representations for Robotics: Geometry, Efficiency, and Semantics

Guest Lecture, CS479: Machine Learning for 3D Data @ KAIST (hosted by Minhyuk Sung, May 2026)

- Predictive Semantic World Models for Long-Horizon Mobile Robot Autonomy

CogAI Group @ Stanford (hosted by Jiajun Wu, Apr 2026)

- Predictive Semantic Foresight for Mobile Robot Autonomy

Kanazawa AI Research Lab @ UC Berkeley (hosted by Angjoo Kanazawa, Apr 2026)

Co-PI Seminar on Optimization, Control, and Learning @ UCSD (hosted by Nikolay Atanasov, Apr 2026)

Interactive and Trustworthy Robotics Lab @ CMU (hosted by Andrea Bajcsy, Apr 2026)

- Predictive Mapping and Semantic Reasoning for Autonomous Mobile Robots

Scalable Spatial Intelligence Lab @ U Michigan (hosted by Yulun Tian, Mar 2026)

Robot Learning Seminar @ U Buffalo / Georgia Tech / Penn State (hosted by Chen Wang, Mar 2026)

- Spatial Reasoning and Semantic Representations for Intelligent Multi-Robot Exploration and Navigation

Artificial Intelligence for Robot Coordination at Scale Lab @ CMU (hosted by Jiaoyang Li, Jul 2025)

Resilient Intelligent Systems Lab @ CMU (hosted by Wennie Tabib, Nov 2024)


SuperMap: A Spatio-Temporal SLAM System for Visual-Language Navigation

Shibo Zhao*, Guofei Chen*, Honghao Zhu, Zhiheng Li, Changwei Yao, Nader Zantout, Seungchan Kim, Wenshan Wang, Ji Zhang, Sebastian Scherer

Robotics: Science and Systems (RSS) 2026


PRoID: Predicted Rate of Information Delivery in Multi-Robot Exploration and Relaying

Seungchan Kim, Seungjae Baek, Micah Corah, Graeme Best, Brady Moon, Sebastian Scherer

arXiv preprint arXiv:2604.10433 (2026). Under Review

paper | arXiv

RADSeg: Unleashing Parameter and Compute Efficient Zero-Shot Open-Vocabulary Segmentation Using Agglomerative Models

Omar Alama*, Darshil Jariwala*, Avigyan Bhattacharya*, Seungchan Kim, Wenshan Wang, Sebastian Scherer

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026 Findings

CVPR 2026 Workshop on Open-World 3D Scene Understanding and Representations

paper | arXiv | project page | code

RAVEN: Resilient Aerial Navigation via Open-Set Semantic Memory and Behavior Adaptation

Seungchan Kim, Omar Alama, Dmytro Kurdydyk, John Keller, Nikhil Keetha, Wenshan Wang, Yonatan Bisk, Sebastian Scherer

IEEE International Conference on Robotics and Automation (ICRA) 2026

(Oral Presentation, 8.3% of Accepted Papers)

IROS 2025 Active Perception Workshop, Best Paper Finalist (Spotlight Presentation)

paper | arXiv | project page | code | video | presentation video

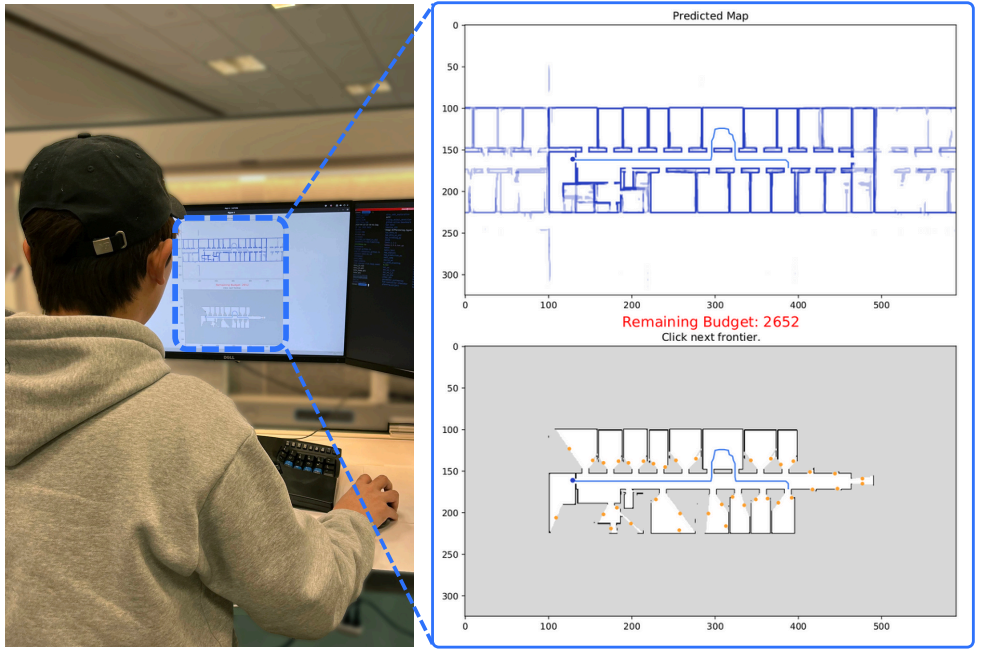

MapExRL: Human-Inspired Indoor Exploration with Predicted Environment Context and Reinforcement Learning

Narek Harutyunyan*, Brady Moon*, Seungchan Kim, Cherie Ho, Adam Hung, Sebastian Scherer

IEEE International Conference on Advanced Robotics (ICAR) 2025

ICRA 2025 Workshop on Structured Learning for Efficient, Reliable, and Transparent Robots

paper | arXiv | project page | DOI | video

RayFronts: Open-Set Semantic Ray Frontiers for Online Scene Understanding and Exploration

Omar Alama, Avigyan Bhattacharya, Haoyang He, Seungchan Kim, Yuheng Qiu, Wenshan Wang, Cherie Ho, Nikhil Keetha, Sebastian Scherer

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2025

RSS 2025 Workshop on Semantic Reasoning and Goal Understanding in Robotics

RSS 2025 Workshop on Learned Robot Representations

paper | arXiv | project page | code | DOI | video

PIPE Planner: Pathwise Information Gain with Map Predictions for Indoor Robot Exploration

Seungjae Baek*, Brady Moon*, Seungchan Kim*, Muqing Cao, Cherie Ho, Sebastian Scherer, Jeong hwan Jeon

(*: Equal Contributions)

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2025

paper | arXiv | project page | code | DOI | video

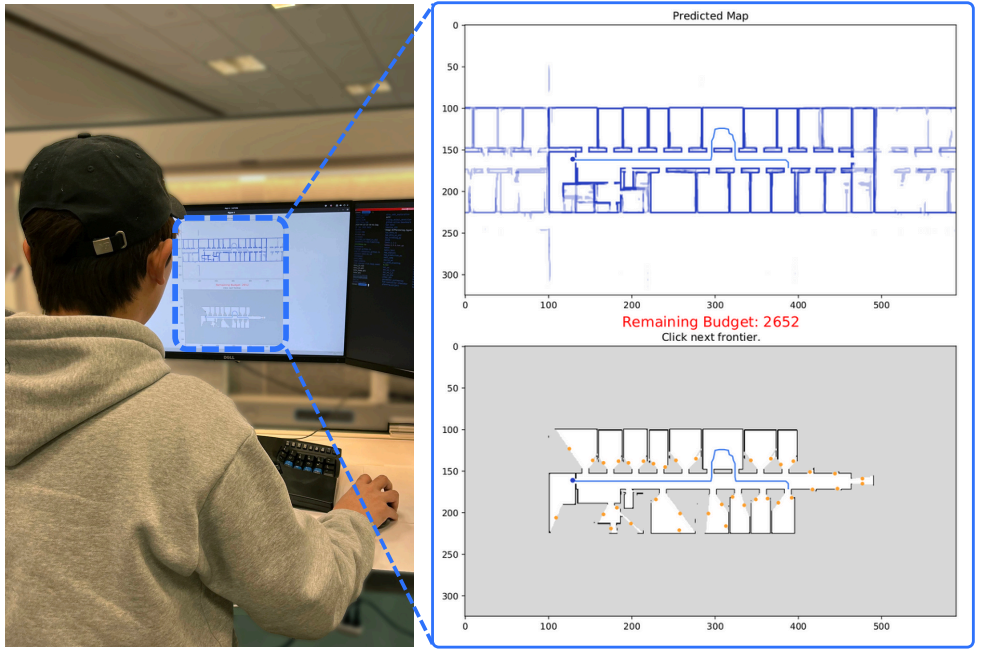

MapEx: Indoor Structure Exploration with Probabilistic Information Gain from Global Map Predictions

Cherie Ho*, Seungchan Kim*, Brady Moon, Aditya Parandekar, Narek Harutyunyan, Chen Wang, Katia Sycara, Graeme Best, Sebastian Scherer

(*: Equal Contributions)

IEEE International Conference on Robotics and Automation (ICRA) 2025

paper | arXiv | project page | code | DOI | video | presentation video

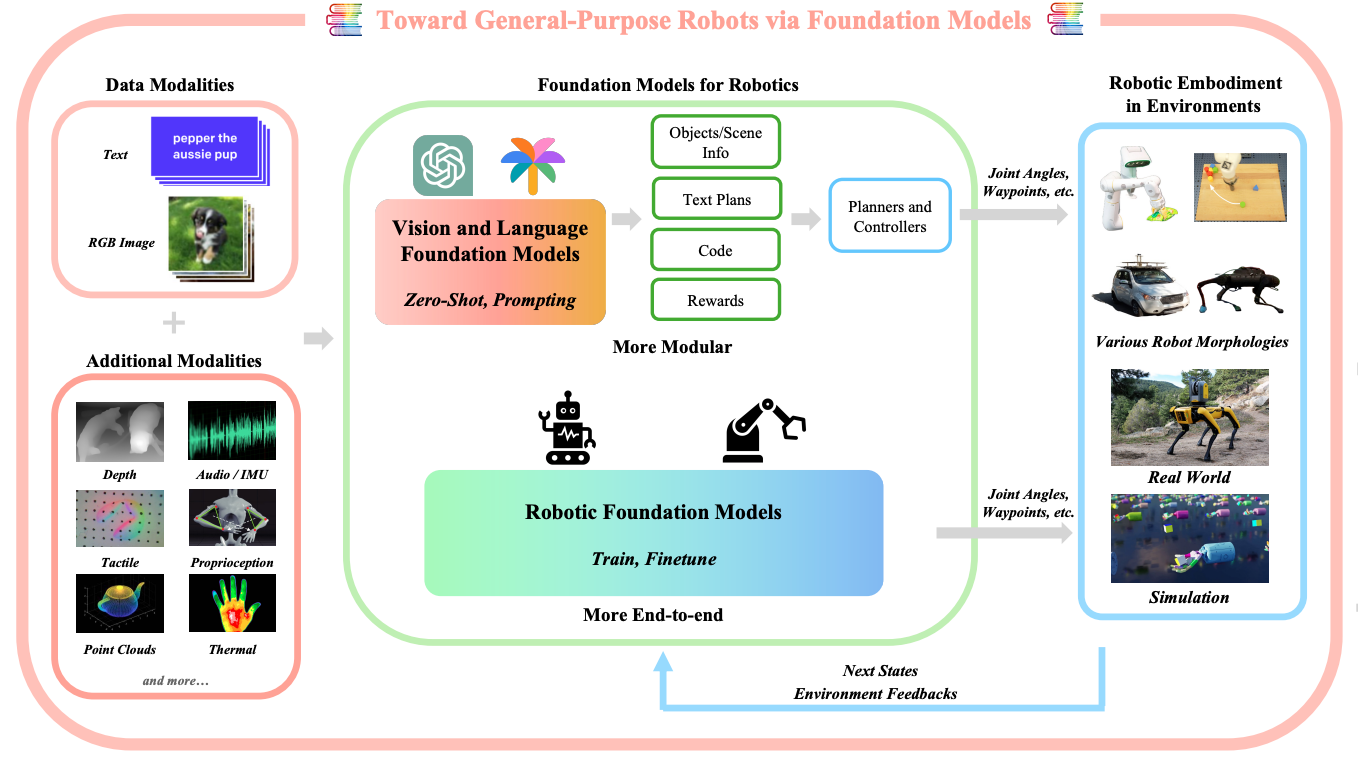

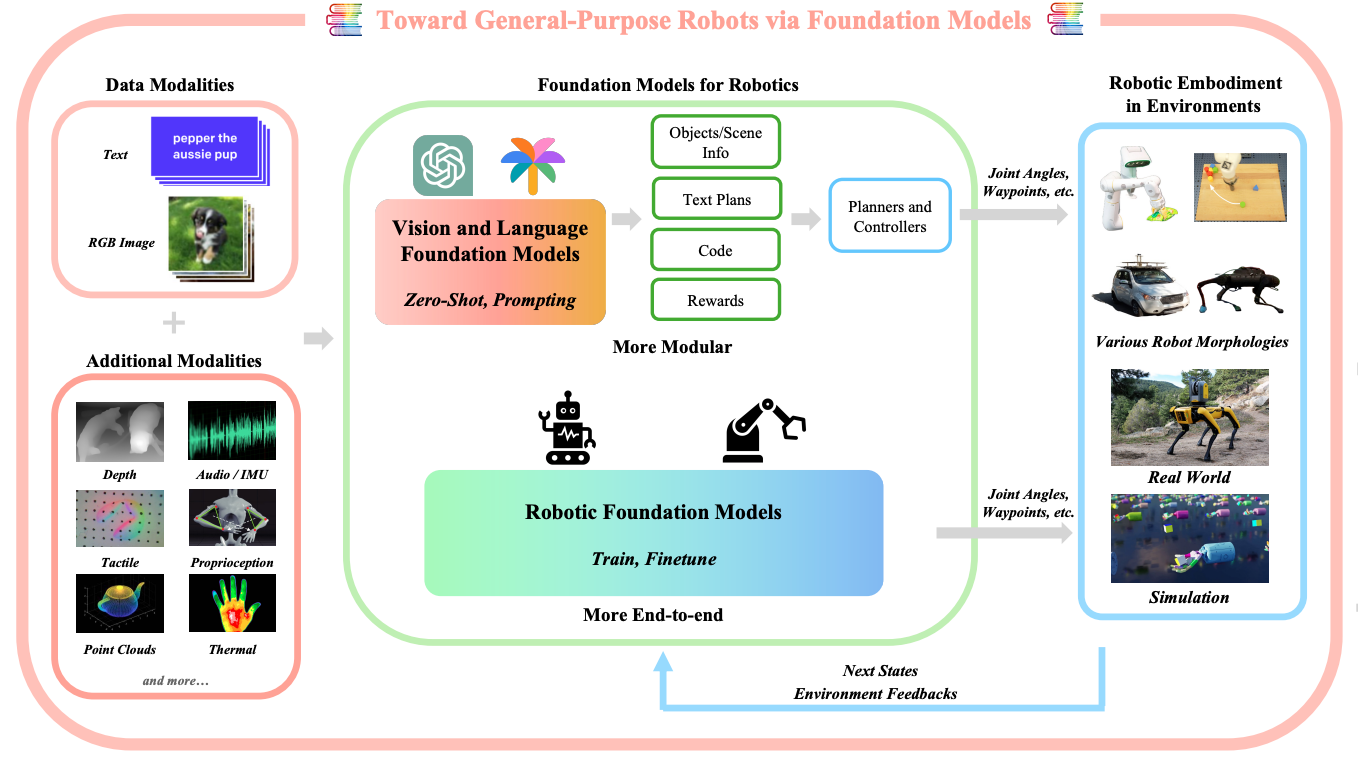

Toward General-Purpose Robots via Foundation Models: A Survey and Meta-Analysis

Yafei Hu*, Quanting Xie*, Vidhi Jain*, Jonathan Francis, Jay Patrikar, Nikhil Keetha, Seungchan Kim, Yaqi Xie, Tianyi Zhang, Hao-Shu Fang, Shibo Zhao, Shayegan Omidshafiei, Dong-Ki Kim, Ali-akbar Agha-mohammadi, Katia Sycara, Matthew Johnson-Roberson, Dhruv Batra, Xiaolong Wang, Sebastian Scherer, Chen Wang, Zsolt Kira, Fei Xia, Yonatan Bisk

arXiv preprint arXiv:2312.08782 (2023).

paper | arXiv | project page

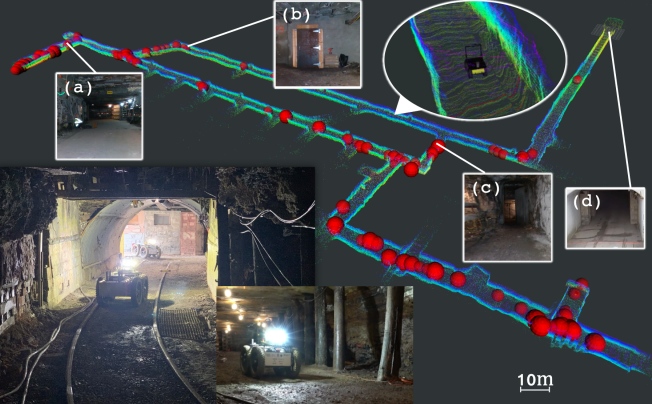

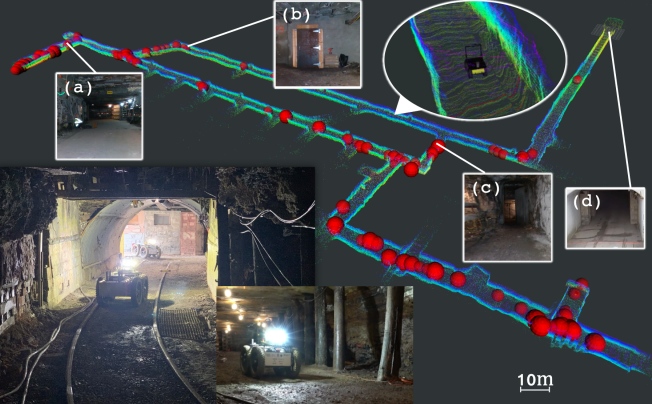

Multi-Robot Multi-Room Exploration with Geometric Cue Extraction and Circular Decomposition

Seungchan Kim, Micah Corah, John Keller, Graeme Best, Sebastian Scherer

IEEE Robotics and Automation Letters (RA-L) 2023

Presentation at IEEE International Conference on Robotics and Automation (ICRA) 2024

paper | arXiv | project page | DOI | video | presentation video

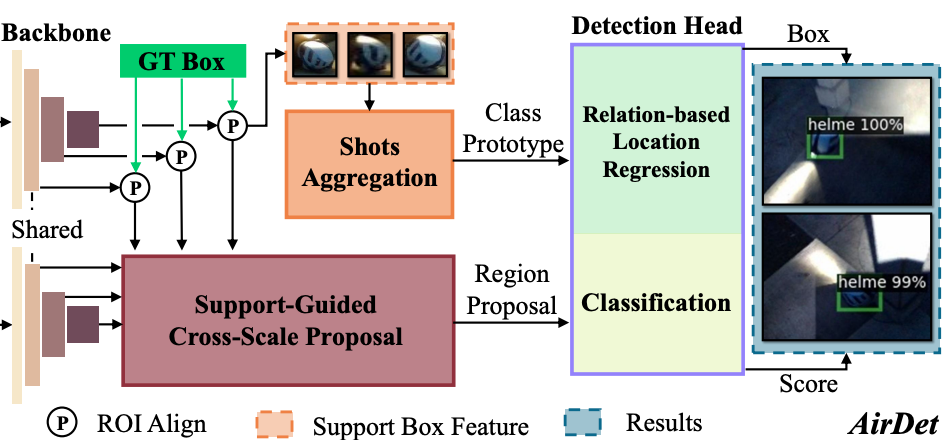

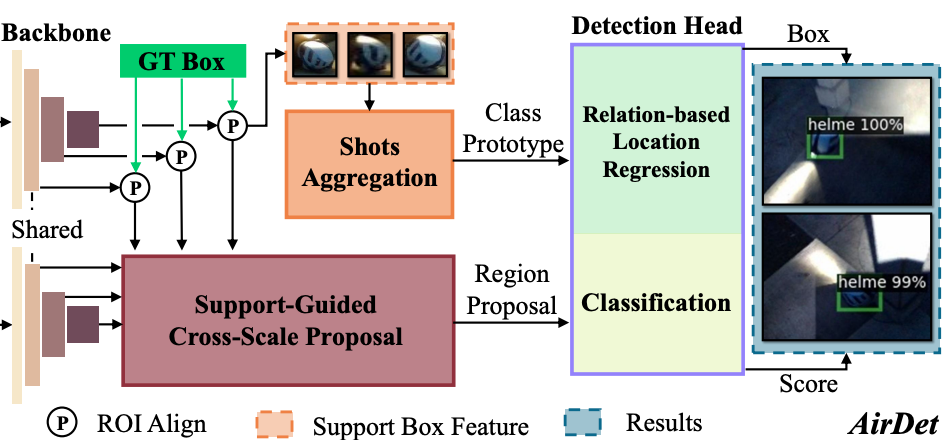

AirDet: Few-Shot Detection without Fine-tuning for Autonomous Exploration

Bowen Li, Chen Wang, Pranay Reddy, Seungchan Kim, Sebastian Scherer

European Conference on Computer Vision (ECCV) 2022

paper | arXiv | project page | code | DOI

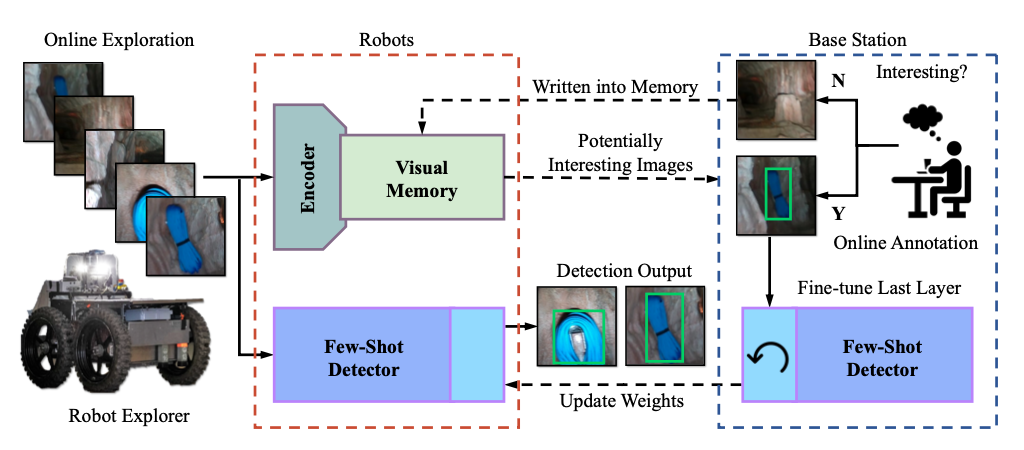

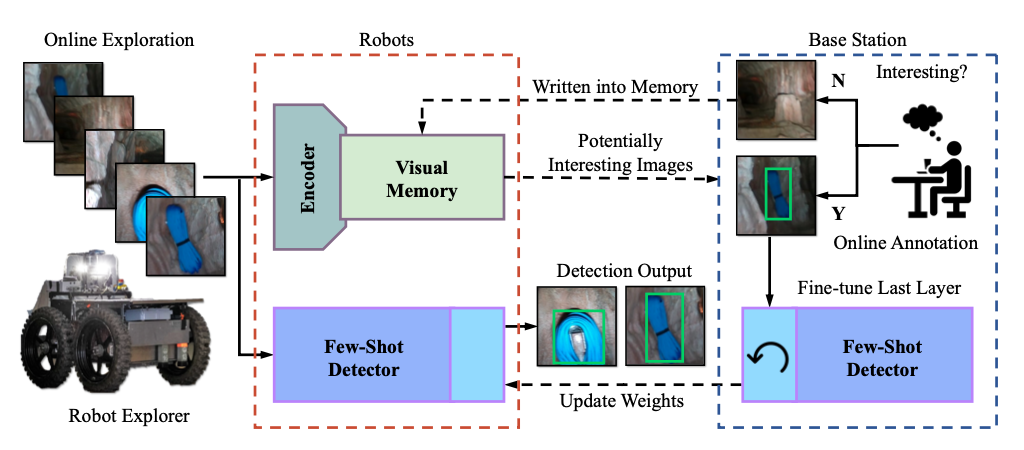

Robotic Interestingness via Human-Informed Few-Shot Object Detection

Seungchan Kim, Chen Wang, Bowen Li, Sebastian Scherer

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2022

paper | arXiv | DOI | video


Unsupervised Online Learning for Robotic Interestingness with Visual Memory

Chen Wang, Yuheng Qiu, Wenshan Wang, Yafei Hu, Seungchan Kim, Sebastian Scherer

IEEE Transactions on Robotics (T-RO) 2021

paper | arXiv | project page | code | DOI


Using Computational Analysis of Behavior to Discover Developmental Change in Memory-Guided Attention Mechanisms in Childhood

Dima Amso, Lakshmi Govindarajan, Pankaj Gupta, Diego Placido, Heidi Baumgartner, Andrew Lynn, Kelley Gunther, Tarun Sharma, Vijay Veerabadran, Kalpit Thakkar, Seungchan Kim, Thomas Serre

PsyArXiv. doi:10.31234/osf.io/gq4rt

DOI


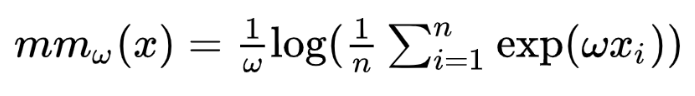

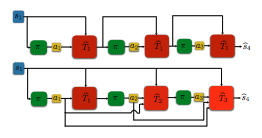

Combating the Compounding-Error Problem with a Multi-step Model

Kavosh Asadi, Dipendra Misra, Seungchan Kim, Michael Littman

arXiv preprint arXiv:1905.13320 (2019).

paper | arXiv


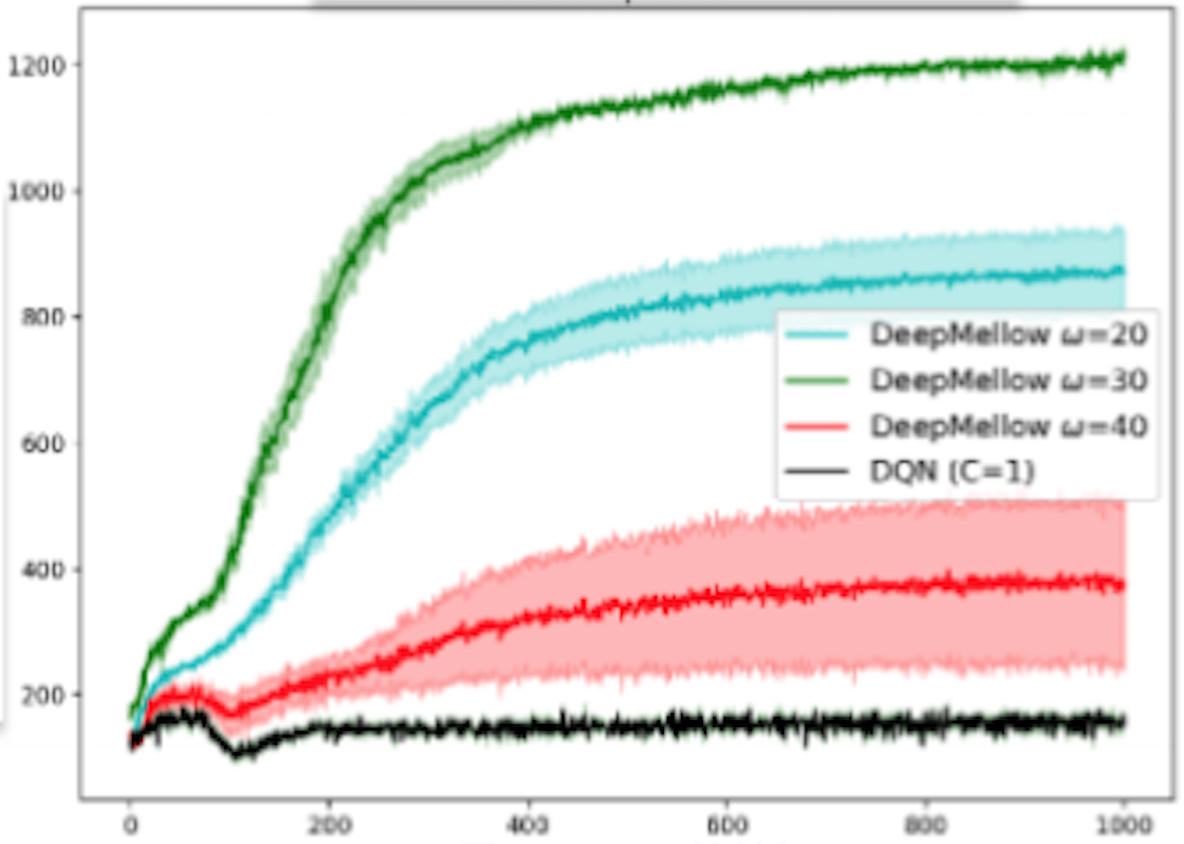

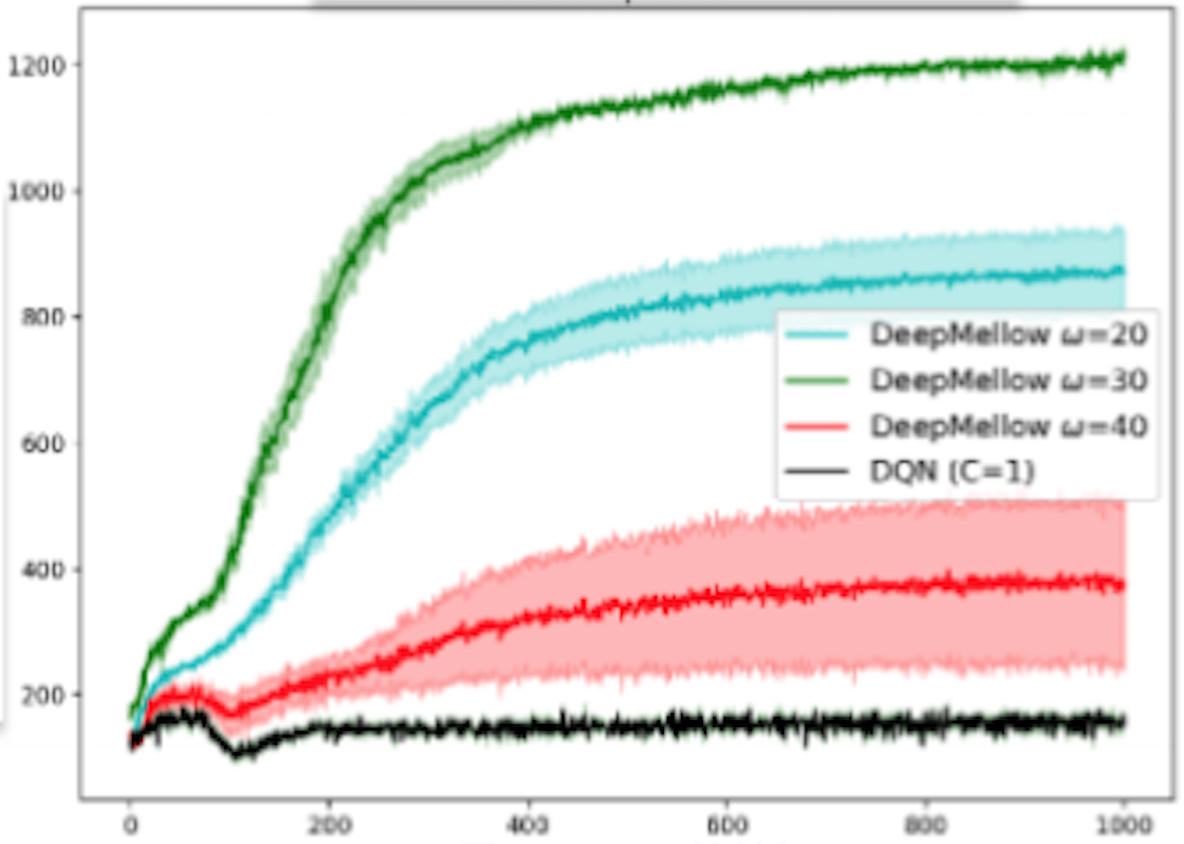

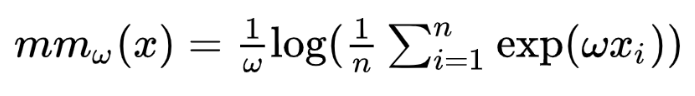

DeepMellow: Removing the Need for a Target Network in Deep Q-Learning

Seungchan Kim, Kavosh Asadi, Michael Littman, George Konidaris

International Joint Conference on Artificial Intelligence (IJCAI) 2019

Multidisciplinary Conference on Reinforcement Learning and Decision Making (RLDM) 2019

paper | code | DOI


Removing the Target Network from Deep Q-Networks with the Mellowmax Operator

Seungchan Kim, Kavosh Asadi, Michael Littman, George Konidaris

International Conference on Autonomous Agents and Multiagent Systems (AAMAS) 2019, Extended Abstract

paper


Carnegie Mellon University Robotics Institute

16-711 Kinematics, Dynamics, Control, Teaching Assistant, Spring 2023

16-833 Robot Localization and Mapping, Teaching Assistant, Spring 2022

Brown University

CSCI1430 Computer Vision, Teaching Assistant, Spring 2019

CSCI0040 Intro to Scientific Computing and Problem Solving, Teaching Assistant, Spring 2015


Organizer:

Reviewer: IJRR, T-ASE, RA-L, ICRA, IROS, CoRL, CASE, MRS, ICAR, ICLR, NeurIPS, AAAI, ICML
